To understand the magnitude of the MindVLA announcement, one must first understand the powerhouse behind it. Li Auto is not just another EV startup; it is a dominant force in the Chinese premium electric vehicle market. Founded in 2015 by entrepreneur Xiang Li, the company has carved out a massive niche by focusing on Extended-Range Electric Vehicles (EREVs) and high-end battery electric vehicles (BEVs) designed for families.

Unlike many competitors who struggled with scaling, Li Auto has seen meteoric growth. According to their March 2026 delivery update, the company continues to break records, delivering tens of thousands of vehicles monthly. However, their hardware is only half the story. The real “moat” Li Auto is building lies in its software stack, specifically its push toward an End-to-End (E2E) autonomous driving philosophy that mimics human intuition rather than rigid, hand-coded logic.

Understanding MindVLA: Perception Without a Script

At the most recent NVIDIA GTC, Li Auto pulled back the curtain on MindVLA. To the uninitiated, it sounds like another acronym in the alphabet soup of automotive tech, but for the industry, it represents a generational leap in Embodied AI.

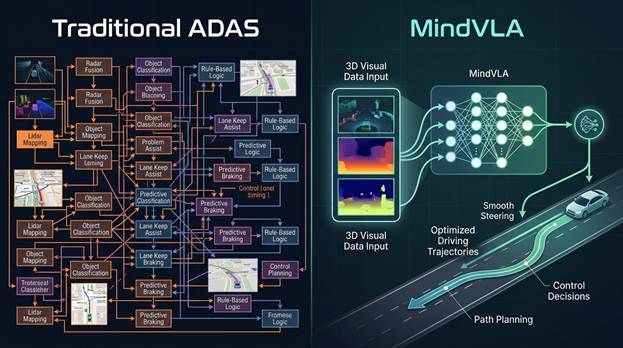

The VLA in MindVLA stands for Vision-Language-Action. Traditionally, Advanced Driver Assistance Systems (ADAS) relied on modular stacks: one part of the code detected lane lines, another detected cars, and a third, the “planner,” decided how to steer based on a pre-loaded High-Definition (HD) Map. If the map was outdated or the road layout had changed, the system would fail or “disengage.”
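To make the failure mode of the traditional modular stack concrete, here is a minimal toy sketch in Python. Everything in it is hypothetical and for illustration only: the `HDMap` class, the `detect_lanes`, `detect_cars`, and `plan` functions, and the dictionary used as a camera "frame" are assumptions, not any vendor's actual code.

```python
from dataclasses import dataclass


@dataclass
class HDMap:
    """Hypothetical pre-loaded map: how many lanes it expects at this spot."""
    lane_count: int


def detect_lanes(frame: dict) -> int:
    """Toy lane-detection module: reads the lane count from a fake frame."""
    return frame["lanes_seen"]


def detect_cars(frame: dict) -> int:
    """Toy vehicle-detection module: reads the car count from a fake frame."""
    return frame["cars_seen"]


def plan(frame: dict, hd_map: HDMap) -> str:
    """Toy planner: trusts the HD map and gives up when reality disagrees."""
    lanes = detect_lanes(frame)
    cars = detect_cars(frame)
    if lanes != hd_map.lane_count:
        # The road no longer matches the stored map (e.g., construction).
        return "disengage"
    return "slow_down" if cars > 0 else "keep_lane"


stored_map = HDMap(lane_count=3)
normal_road = {"lanes_seen": 3, "cars_seen": 1}
construction_zone = {"lanes_seen": 2, "cars_seen": 0}

print(plan(normal_road, stored_map))        # map matches the road
print(plan(construction_zone, stored_map))  # map is stale
```

In this sketch the planner hands control back as soon as the perceived lane count disagrees with the stored map, which is the brittleness described above.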

MindVLA discards the crutch of HD mapping. It utilizes a 3D Vision Transformer (ViT) Encoder to perceive the world in true spatial depth. Instead of just seeing a flat image and guessing distance, the model understands the volume, velocity, and intent of everything in its field of view. By integrating a Large Language Model (LLM) framework, the car doesn’t just “see” a stop sign; it understands context. It can read temporary construction signs, interpret hand gestures from a traffic officer, and navigate “unmapped” rural roads with the same confidence as a major highway.

The Death of the “If-Then” Logic

The previous generation of AI driving was “heuristic-based.” It followed rules: If a car is in front, then slow down. MindVLA is built on the Transformer architecture, the same class of neural network behind ChatGPT, and applies it end to end. This means the raw sensor data goes into the neural network, and driving commands (steering, braking) come out the other side.
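The contrast between an “if-then” rule and a learned mapping can be sketched in a few lines of Python. This is only a toy under loud assumptions: the 30-meter threshold, the `Command` type, and the single linear layer standing in for a neural network are all hypothetical, and a real system like MindVLA would use a large Transformer with learned weights rather than the hand-picked numbers here.

```python
from dataclasses import dataclass


@dataclass
class Command:
    steering: float  # arbitrary units, positive = left
    braking: float   # 0.0 (none) to 1.0 (full)


def heuristic_planner(lead_gap_m: float) -> Command:
    """Hand-coded rule: if a car is close in front, then slow down."""
    if lead_gap_m < 30.0:  # hypothetical threshold chosen by a programmer
        return Command(steering=0.0, braking=0.5)
    return Command(steering=0.0, braking=0.0)


def end_to_end_policy(sensor_features: list, weights: dict) -> Command:
    """Toy stand-in for a learned network: sensor features in, commands out.

    A single linear layer is used purely for illustration; the weights would
    come from training on driving data, not from hand-written rules.
    """
    steering = sum(w * x for w, x in zip(weights["steering"], sensor_features))
    raw_brake = sum(w * x for w, x in zip(weights["braking"], sensor_features))
    braking = min(1.0, max(0.0, raw_brake))  # clamp to the valid range
    return Command(steering=steering, braking=braking)


# Hypothetical weights and features, just to show the data flow.
toy_weights = {"steering": [0.1, 0.2], "braking": [2.0, 0.0]}
print(heuristic_planner(10.0))
print(end_to_end_policy([1.0, 0.0], toy_weights))
```

The design point is that the second function contains no driving rules at all; its behavior lives entirely in the weights.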

The breakthrough here is the ability to handle “corner cases”—those bizarre, once-in-a-year events that programmers can’t predict. Because MindVLA has been trained on massive datasets of human driving behavior, it has developed a form of “common sense.” It understands that a ball rolling into the street likely means a child is following it, even if it doesn’t “see” the child yet. This level of Predictive Analytics was effectively impossible in the ADAS systems of just a few years ago.

The State of Autonomy: Where Do We Stand?

General sentiment regarding autonomous driving has shifted from “it’s coming next year” to a more cynical outlook. This skepticism is fueled by the struggles of robotaxi firms and the slow rollout of SAE Level 3 systems in the West.

However, the “trough of disillusionment” is ending. We are moving from Level 2+ (hands-on, eyes-on) to true environmental autonomy. The industry has realized that the bottleneck wasn’t the sensors—Lidar and cameras are now excellent—but the computational brain. MindVLA represents the shift toward a software-defined vehicle that learns from experience rather than just following a script.

Is NVIDIA Still the King of the Hill?

The short answer is yes, but the reasons have moved beyond simple chip speed. While competitors like Tesla use in-house chips, and firms like Huawei offer compelling hardware, NVIDIA’s dominance is anchored in the DRIVE Thor platform and NVIDIA Omniverse.

Li Auto’s MindVLA runs on NVIDIA silicon because it requires massive throughput, measured in floating-point operations per second (FLOPS), to process 3D transformers in real time. But NVIDIA’s greatest advantage is Simulation. Before MindVLA ever touched a real road, it “drove” billions of miles in a digital twin of the world.

NVIDIA’s Omniverse Cloud allows Li Auto to recreate dangerous scenarios—near-misses, extreme weather, and sensor failures—safely in a virtual environment. Competitors are struggling to match this “data factory” approach. NVIDIA isn’t just selling a processor; they are selling the entire AI Infrastructure where the future of driving is synthesized.
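As a rough illustration of the “data factory” idea, the sketch below enumerates combinations of conditions that a simulator could replay. It does not use or imitate any NVIDIA Omniverse API; the category lists and the `build_scenarios` helper are hypothetical names chosen for this example.

```python
from itertools import product

# Hypothetical scenario dimensions, loosely following the categories above.
WEATHER = ["clear", "heavy_rain", "fog", "snow"]
EVENTS = ["near_miss_cut_in", "pedestrian_crossing", "debris_on_road"]
SENSOR_FAULTS = ["none", "camera_blocked", "lidar_dropout"]


def build_scenarios() -> list:
    """Return every combination of weather, event, and sensor fault."""
    return [
        {"weather": w, "event": e, "sensor_fault": f}
        for w, e, f in product(WEATHER, EVENTS, SENSOR_FAULTS)
    ]


scenarios = build_scenarios()
print(len(scenarios))  # 4 * 3 * 3 combinations
print(scenarios[0])
```

Even this tiny grid yields dozens of test cases, which hints at why rare, dangerous combinations are far cheaper to cover in simulation than on public roads.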

When Can You Buy It?

The rollout of MindVLA is not a distant dream. Li Auto has already begun integrating these models into their “AD Max” platforms. Vehicles such as the Li L9, L8, and the ultra-aerodynamic Li MEGA MPV are the primary candidates for this technology.

As for the timeline, Li Auto is currently pushing “Mapless NOA” (Navigate on ADAS) to its fleet in China via Over-the-Air (OTA) updates. By late 2025 and early 2026, MindVLA is expected to be the standard operating logic for their high-end trims. While the current geopolitical climate and automotive tariffs make a US launch unlikely in the immediate future, the technology itself will force Western automakers to accelerate their own development to remain competitive on the global stage.

Wrapping Up

The unveiling of MindVLA at NVIDIA GTC marks a pivot point in automotive history. We are witnessing the transition from cars that “follow rules” to cars that “understand the world.” Li Auto has proven that by leveraging NVIDIA’s massive computational power and simulation tools, they can bypass the need for expensive, high-maintenance HD maps. This creates a driving experience that is more human, more adaptable, and ultimately safer. While the West remains focused on the promises of the past, Li Auto and NVIDIA are quietly delivering the 3D-perceptive reality of the future.

Disclosure: Images rendered by Artlist.io

Rob Enderle is a technology analyst at Torque News who covers automotive technology and battery developments. You can learn more about Rob on Wikipedia and follow his articles on TechNewsWorld, TGDaily, and TechSpective.
